Thank you to everyone who joined us. The recording from our webinar is now available below.

About this session

On 30 April, Precision Development (PxD) hosted a webinar bringing together practitioners from PxD, Youth Impact and The Agency Fund to explore what it really takes to build a culture of iterative learning within a development organisation.

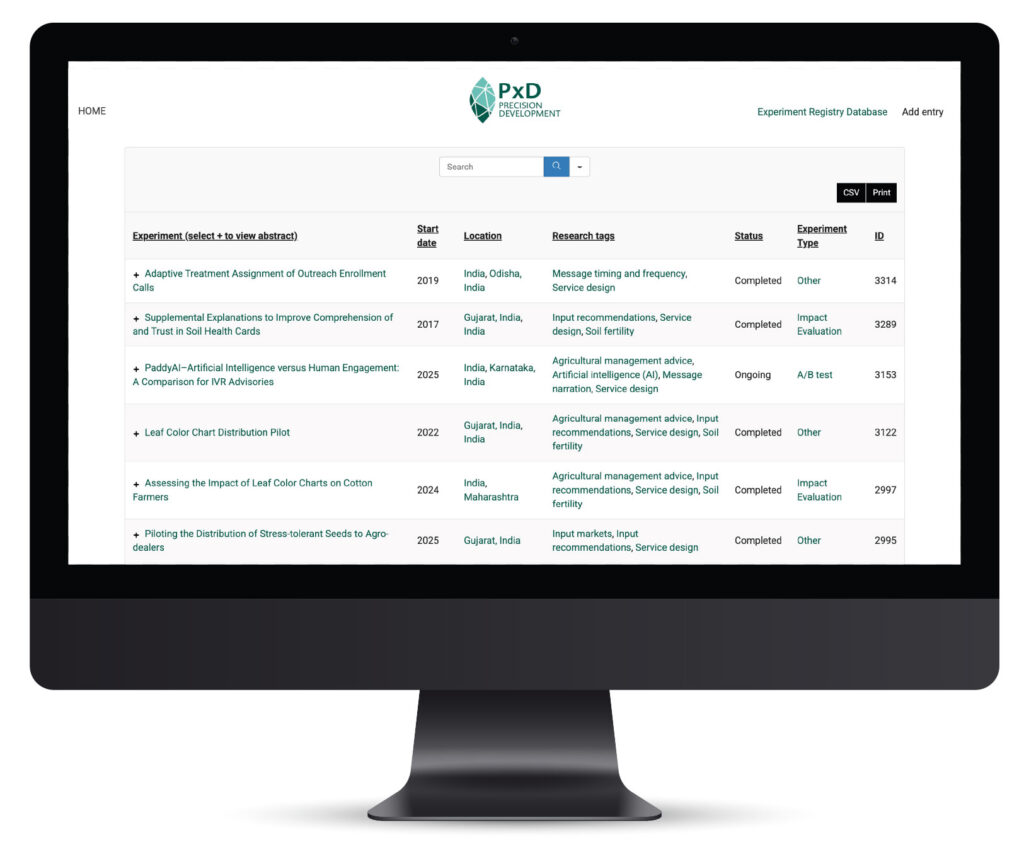

The session introduced PxD’s Experiment Registry — an open platform documenting the experiments behind our programmes — and featured candid conversations about how to embed a testing mindset across teams, partners and geographies.

Speakers:

Niriksha Shetty, CEO, Precision Development (PxD) — introduction and welcome

Elia Gandolfi, The Agency Fund — Evidential and how organisations are using it

Noam Angrist, Youth Impact — operationalising experimentation at scale

Tomoko Harigaya, PxD — the Experiment Registry in practice

This year, PxD marks 10 years of driving scalable impact at the intersection of technology, evidence, and farmer-centred solutions. It’s a moment to reflect on how far we’ve come and to share the tools and approaches, like the registry, that will carry our work forward.

Questions from the audience

- Fit is about the use cases, metrics and target users. Commercial platforms are built to optimize click-through rates for tech-literate shoppers on e-commerce sites.

Channels is about integrations. E.g., if my organization delivers its program through turn.io, plugging in an A/B testing platform takes significant engineering overhead, while Evidential does it in three clicks.

- A/B testing is only one of several methods to answer design questions.

Rapid experimentation at scale requires low-cost means to collect or observe immediate outcomes. This is harder for some services than others. For instance, we can’t observe real-time engagement with SMS messages (whether a farmer opens or reads the message), and for a radio campaign without a farmer database, it’s hard to identify farmers to follow up with at low cost.

Experimentation only works for design elements that can be varied simultaneously across target users. Design questions around operational and system-wide processes and infrastructure can be difficult to A/B test.

- This has been a challenge. We sat on many insights that were not socialized or used to inform decisions. Here are a couple of things that have helped at PxD:

(i) key insights and new findings periodically synthesized and shared across the organization through multiple channels

(ii) design process where we explicitly discuss how past insights are incorporated into design decisions (and which design questions we want to test further)

But we’re still very much figuring out how to improve the way we work.

- Yes, we find the following helpful: 1) starting with questions counterparts actually care about, 2) improving their existing processes in ways that reduce burden (e.g., digitizing data collection in government call centers), and 3) creating low-cost forums to act on insights, such as monthly senior leadership meetings to review insights and actionable recommendations (e.g., PxD’s work with KALRO) or collaborative experimentation where a technical partner leads analysis but the government is engaged throughout (e.g., PxD’s A/B testing with ATI).

The Agency Fund’s accelerator model offers a strong template for communities of practice, with real potential to replicate this at the country level so learning stays rooted in local context and remains actionable for local implementers.

- We think funders can accelerate A/B testing adoption in three ways.

1) Reduce the cost and risk by covering full experiment costs — including staff time for analysis — and supporting the systems and processes organizations need to run them.

2) Signal that lightweight-but-rigorous evidence is valued. A/B testing complements both descriptive monitoring data and full RCTs, offering a credible and lower-cost path to generating experimental evidence. Recognizing a well-designed comparison group as meaningful progress, along with the right technical support, could unlock experimentation for organizations that may find the leap from monitoring outputs to full-scale RCTs out of reach.

3) Play a convening role by creating spaces for organizations to share findings and improve service design collectively, so insights benefit the whole sector rather than staying siloed.

- This depends on the type of program and the context. It has not been a huge issue, but it’s important to prepare before the season arrives, priming farmers to expect information. We don’t see huge drop-offs from season to season, and in some cases we provide supplemental information (for farmers growing minor-season crops, or information on preparation, weather, or off-season activities).

- Not too soon. Often, the earlier you build the habit of testing, the better. We think carefully about matching our question to our sample size: with a smaller pilot, we focus on questions where even directional signals are useful, or where we’d expect large differences between variants. We save tests that require detecting subtle differences for when we have more volume.

- To keep A/B tests fully integrated into ongoing scale-up activities, we don’t use holdouts when running them. However, we occasionally include control groups at key junctures.

- Building good data flows (turning raw data into metrics) needs to happen first. Evidential takes those metrics and runs experiments on top.
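
As a rough illustration of the earlier point about matching the question to the sample size, the sketch below estimates how many users each arm of an A/B test would need in order to detect a given difference in a response rate. It uses a standard normal-approximation formula for comparing two proportions and is not taken from PxD's or Evidential's tooling. The function name, the 5% significance level, the 80% power and the example rates are all assumptions chosen for illustration.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_arm(p_control, p_variant, alpha=0.05, power=0.80):
    """Approximate users needed per arm for a two-sided two-proportion z-test.

    p_control and p_variant are the (hypothetical) response rates expected
    in each arm; alpha and power are conventional defaults, not PxD settings.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the test
    z_power = NormalDist().inv_cdf(power)          # quantile for the desired power
    p_bar = (p_control + p_variant) / 2            # pooled rate under the null
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_power * sqrt(p_control * (1 - p_control) + p_variant * (1 - p_variant))
    ) ** 2
    return ceil(numerator / (p_control - p_variant) ** 2)


# Hypothetical rates: a large difference versus a subtle one.
large_gap = sample_size_per_arm(0.20, 0.30)
subtle_gap = sample_size_per_arm(0.20, 0.22)
print(f"Per-arm sample for 20% vs 30%: {large_gap}")
print(f"Per-arm sample for 20% vs 22%: {subtle_gap}")
```

Under these assumptions, the subtle comparison needs many times more users per arm than the large one, which is why tests for small differences are better saved for when there is more volume.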
